I finally found some time to create a complete project tutorial on cifar-10 image classification. This is a project that I did in college, and I will walk you through it so that you can build it yourself.

So, today we will create an image classifier using the keras library and the cifar-10 dataset. We will be using deep convolutional neural networks to do this project.

Even if you don’t know a lot about deep learning and machine learning, you can just follow along with me and I will try to explain every line of code that I write.

Our Action Plan for Today

Let’s do a quick overview of what we are going to do. We are going to create an image classifier that assigns every image in the cifar-10 dataset to one of its 10 object classes. For this project, we will be using the keras library, which is one of the most approachable Python libraries for deep learning projects.

We will create a convolutional neural network model and train it. Finally, we will test the output and evaluate the results that we get. Without any further ado, let’s do it.

Cifar 10 dataset

Cifar-10 is a standard computer vision dataset used for image recognition. It is a subset of the 80 million tiny images dataset and consists of 60,000 32×32 color images containing one of 10 object classes, with 6000 images per class. There are 50000 training images and 10000 test images.

The 10 object classes that are present in this dataset are airplanes, automobiles, birds, cats, deer, dogs, frogs, horses, ships, and trucks. All these classes are mutually exclusive. Note that there is no overlap between automobiles and trucks.

If you want to download this dataset manually, you can go to this link. But you do not need to do that for this project. Here, we will download, extract, and split the dataset from the Python code itself.

Keras Library

Keras is an open-source neural network library written in Python. It can run on top of TensorFlow and gives us a simpler, higher-level interface to it.

Keras is a lot simpler compared to tensorflow and other deep learning libraries. That is why we are using keras in this project.

Setup

Before you start creating the image classification model, make sure you have all the libraries and tools installed in your system. You can check out this article for setting up your environment for doing this project.

You should have keras, scikit-learn, numpy, and all the other necessary libraries installed in your system.

Importing the Required Libraries

Let’s start our project. I’m using a Jupyter notebook for this project, and I recommend that you do the same. However, you can use any code editor you wish. So, open up a new project, and let’s start coding.

First of all, we will import all the required libraries to our project.

import numpy

from keras.models import Sequential

from keras.layers import Dense,Dropout,Flatten,Conv2D,MaxPooling2D

from keras.constraints import maxnorm

from keras.optimizers import SGD

from keras.utils import np_utils

from keras import backend as K

K.set_image_dim_ordering('tf')

We are importing numpy and some packages from keras. Conv2D, MaxPooling2D, Dense, Flatten, and Dropout are the different types of layers that we will use to build our model. Several layers are required for the model since we are using “deep” learning.

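The last line sets the image dimension ordering to ‘tf’, which means the channels come last: (height, width, channels). This matches the (32, 32, 3) input shape that we give to the first layer of the model. Usually, we use ‘tf’ for tensorflow and ‘th’ for theano. Newer keras releases replace this call with K.set_image_data_format('channels_last').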

Importing and Preparing the Cifar-10 Dataset

Now, we will be importing the cifar-10 dataset to our project.

from keras.datasets import cifar10

# let's load data

(X_train, y_train), (X_test, y_test) = cifar10.load_data()

Keras ships with loaders for several standard datasets, so we just import cifar10 from the library itself. Then, we use the load_data() method, which downloads the data on first use and returns it as NumPy arrays, already split into a training set and a test set.

Note how easy this is. Thanks to keras, we do not need to download or split anything manually.

Normalization and One-Hot Encoding

Images are comprised of matrices of pixel values. Normally, pixels are expected to have values in the range of 0-255. We need to normalize these values to a range between 0 and 1.

#normalizing inputs from 0-255 to 0.0-1.0

X_train = X_train.astype('float32')

X_test = X_test.astype('float32')

X_train = X_train / 255.0

X_test = X_test / 255.0

Now, we need to one-hot encode the class labels so that they match the 10 outputs of the network. A one-hot vector contains a single 1 and 0s everywhere else. For example, class 3 out of 10 becomes [0, 0, 0, 1, 0, 0, 0, 0, 0, 0].

# one hot encode outputs

y_train = np_utils.to_categorical(y_train)

y_test = np_utils.to_categorical(y_test)

num_classes = y_test.shape[1]

This converts the label vectors into binary matrices of width 10, with one column per class. The network outputs one probability per class, so the labels have to be in the same form before we can compare the two during training.
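To make the encoding concrete, here is a minimal NumPy sketch of what a one-hot conversion does. The `one_hot` helper and the three sample labels are my own illustration, not part of keras, and the real `to_categorical` may differ in details such as the output dtype.

```python
import numpy as np

def one_hot(labels, num_classes):
    """Turn integer class labels into one-hot rows (illustrative helper)."""
    labels = np.asarray(labels).reshape(-1)         # flatten (N, 1) -> (N,)
    encoded = np.zeros((labels.size, num_classes), dtype="float32")
    encoded[np.arange(labels.size), labels] = 1.0   # a single 1 per row
    return encoded

# cifar-10 labels arrive with shape (N, 1); these three are made up
sample_labels = np.array([[3], [0], [9]])
encoded = one_hot(sample_labels, num_classes=10)

print(encoded.shape)  # one row per label, one column per class
print(encoded[0])     # a 1.0 at index 3, zeros elsewhere
```

Running the encoding a second time on already encoded labels would add another dimension, so it is worth running this step only once per freshly loaded dataset.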

Creating the Image Classification Model

Let’s initialize a convolutional neural network using the sequential model of keras.

# Create the model

model = Sequential()

You can use either the Sequential or the Functional API for creating keras models. We are using Sequential here since it allows us to create the model layer by layer.

Now, we are going to add all the layers required for our convolutional neural network.

model.add(Conv2D(32, (3, 3), input_shape=(32,32,3), activation='relu', padding='same'))

model.add(Dropout(0.2))

model.add(Conv2D(32, (3, 3), activation='relu', padding='same'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Conv2D(64, (3, 3), activation='relu', padding='same'))

model.add(Dropout(0.2))

model.add(Conv2D(64, (3, 3), activation='relu', padding='same'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Conv2D(128, (3, 3), activation='relu', padding='same'))

model.add(Dropout(0.2))

model.add(Conv2D(128, (3, 3), activation='relu', padding='same'))

model.add(MaxPooling2D(pool_size=(2, 2)))

model.add(Flatten())

model.add(Dropout(0.2))

model.add(Dense(1024, activation='relu', kernel_constraint=maxnorm(3)))

model.add(Dropout(0.2))

model.add(Dense(512, activation='relu', kernel_constraint=maxnorm(3)))

model.add(Dropout(0.2))

model.add(Dense(num_classes, activation='softmax'))

I will explain what each layer is.

Conv2D stands for a 2-dimensional convolutional layer.

Here, 32 is the number of filters needed. A filter is an array of numeric values. (3,3) is the size of the filter, which means 3 rows and 3 columns.

The input image has the shape 32*32*3, that is, a height of 32, a width of 32, and 3 color channels (RGB). Each number in this (32,32,3) array describes the pixel intensity at that point. The raw values run from 0 to 255, and after the normalization step above they lie between 0 and 1.

The output of this layer will be some feature maps. A feature map is a map that shows some specific features of the image.
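If you want to see how one filter turns an image into a feature map, here is a small NumPy sketch. It is only an illustration under my own assumptions: a single-channel 5x5 image, a hand-picked edge filter, and zero padding to mimic padding='same'. The real Conv2D layer learns its filter values and works on all channels at once.

```python
import numpy as np

def conv2d_same(image, kernel):
    """Slide one square filter over a single-channel image with zero padding."""
    k = kernel.shape[0]
    pad = k // 2
    padded = np.pad(image, pad, mode="constant")    # zeros around the border
    out = np.zeros_like(image, dtype="float32")
    for row in range(image.shape[0]):
        for col in range(image.shape[1]):
            window = padded[row:row + k, col:col + k]
            out[row, col] = np.sum(window * kernel)  # multiply and add up
    return out

# Hypothetical 5x5 image: dark on the left, bright on the right
image = np.array([[0, 0, 1, 1, 1]] * 5, dtype="float32")

# Hand-picked filter that responds to left-to-right brightness changes
vertical_edge = np.array([[-1, 0, 1],
                          [-1, 0, 1],
                          [-1, 0, 1]], dtype="float32")

feature_map = conv2d_same(image, vertical_edge)
print(feature_map.shape)  # same 5x5 size as the input
print(feature_map[2])     # middle row: 0, 3, 3, 0, -3
```

The large values in the middle row sit exactly where the image changes from dark to bright, which is the "feature" this filter detects. The -3 in the last column comes from the zero padding at the right border.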

We use the Dropout layer in our model to prevent overfitting. Overfitting happens when a model fits the training data so closely that it fails to generalize to new data. During training, this layer drops out a random set of activations by setting them to zero as data flows through it.
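Here is a rough NumPy sketch of the idea behind dropout with a rate of 0.2. I am assuming the common "inverted dropout" variant, where the surviving activations are scaled up by 1 / (1 - rate) so that the expected sum stays the same. The `dropout` function and the all-ones input are hypothetical and only there for illustration.

```python
import numpy as np

def dropout(activations, rate, rng):
    """Inverted dropout: zero a random subset and rescale the survivors."""
    keep_mask = rng.random(activations.shape) >= rate  # True with prob 1 - rate
    return activations * keep_mask / (1.0 - rate)

rng = np.random.default_rng(0)                 # fixed seed for repeatability
activations = np.ones(1000, dtype="float32")   # made-up layer output
dropped = dropout(activations, rate=0.2, rng=rng)

print((dropped == 0).mean())  # roughly 0.2 of the values are zeroed
print(dropped.max())          # survivors are scaled to 1 / 0.8 = 1.25
```

Dropout is only active while training. At prediction time the layer passes its input through unchanged.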

MaxPooling layer is used for pooling. Pooling reduces the dimensionality of each feature map but retains the most important information. This helps to decrease the computational complexity of our network.
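As a small illustration of 2x2 max pooling, the NumPy sketch below keeps only the largest value from each 2x2 window. The `max_pool_2x2` helper and the 4x4 feature map are made up for this example, and the helper assumes that the height and width are even.

```python
import numpy as np

def max_pool_2x2(feature_map):
    """Keep the maximum of every non-overlapping 2x2 window."""
    h, w = feature_map.shape
    blocks = feature_map.reshape(h // 2, 2, w // 2, 2)  # split into 2x2 blocks
    return blocks.max(axis=(1, 3))

feature_map = np.array([[1, 3, 2, 0],
                        [4, 2, 1, 1],
                        [0, 1, 5, 6],
                        [2, 2, 7, 8]])

pooled = max_pool_2x2(feature_map)
print(pooled)  # 2x2 result: 4 and 2 on top, 2 and 8 below
```

The 4x4 map shrinks to 2x2, so each pooling layer cuts the number of values to a quarter while keeping the strongest response in every window.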

Flatten converts the feature maps into a 1-dimensional vector. We then use Dense layers to build the fully connected part of the network.

An activation function of a neuron defines the output of that neuron, given some input. This output is then used as input for the next neuron and so on until the desired solution is obtained.

We use the ReLU and softmax activation functions in this model. ReLU replaces all the negative values in the feature map with 0. Softmax takes as input a vector of K real numbers and normalizes it into a probability distribution consisting of K probabilities proportional to the exponentials of the input numbers.
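Both activation functions fit in a few lines of NumPy. This is a minimal sketch with made-up input scores. Subtracting the maximum inside softmax is a common numerical-stability trick that does not change the result.

```python
import numpy as np

def relu(x):
    """Replace negative values with 0 and keep positive values unchanged."""
    return np.maximum(x, 0.0)

def softmax(x):
    """Turn a vector of scores into probabilities that sum to 1."""
    shifted = x - np.max(x)   # avoids overflow in exp for large scores
    exps = np.exp(shifted)
    return exps / exps.sum()

scores = np.array([2.0, -1.0, 0.5])   # hypothetical raw outputs

activated = relu(scores)
probs = softmax(scores)

print(activated)           # the -1.0 becomes 0.0
print(probs, probs.sum())  # three probabilities that add up to 1
```

The largest score always gets the largest probability, which is why we can later read the predicted class from the position of the biggest softmax output.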

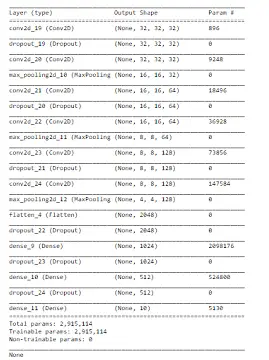

This is what our CNN model looks like.
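The output sizes and parameter counts of a model like this can also be worked out by hand. The sketch below is my own cross-check in plain Python, assuming the layers are exactly the ones added above (3x3 filters, padding='same', 2x2 pooling). The helper names are hypothetical.

```python
def conv_params(in_channels, filters, kernel=3):
    """Weights of one conv layer: kernel*kernel*in_channels per filter, plus a bias."""
    return (kernel * kernel * in_channels + 1) * filters

def dense_params(inputs, units):
    """Weights of one dense layer: one per input for each unit, plus a bias."""
    return (inputs + 1) * units

size, channels = 32, 3   # cifar-10 images are 32x32 with 3 channels
total = 0

for block_filters in (32, 64, 128):
    total += conv_params(channels, block_filters)       # first conv of the block
    total += conv_params(block_filters, block_filters)  # second conv of the block
    channels = block_filters
    size //= 2                                          # 2x2 max pooling halves the size

flat = size * size * channels   # length of the vector after Flatten
print(size, channels, flat)     # 4 128 2048

for inputs, units in ((flat, 1024), (1024, 512), (512, 10)):
    total += dense_params(inputs, units)

print(total)                    # 2915114
```

Dropout, MaxPooling2D, and Flatten have no trainable weights, so they do not add to the total. Most of the parameters sit in the first Dense layer, which connects 2048 inputs to 1024 units.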

You can print this overview with the summary() method.

model.summary()

Compiling the Model

We need to compile the CNN model before we train it. We can use the compile() method for this. Also, let’s define the learning rate for training.

# Compile model

# Number of training epochs, also used to derive the learning rate decay
epochs = 100

lrate = 0.01

decay = lrate/epochs

sgd = SGD(lr=lrate, momentum=0.9, decay=decay, nesterov=False)

model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

Categorical_crossentropy compares the distribution of the predictions with the true distribution. SGD stands for stochastic gradient descent, which is a classical optimization algorithm.
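To get a feel for what the loss measures, here is a minimal NumPy sketch of categorical cross-entropy for a single example with 3 classes. The predictions are made up, and the small `eps` clipping is my own safeguard against log(0); keras handles this internally in its own way.

```python
import numpy as np

def categorical_crossentropy(y_true, y_pred, eps=1e-7):
    """Cross-entropy between one-hot labels and predicted probabilities."""
    y_pred = np.clip(y_pred, eps, 1.0 - eps)   # avoid log(0)
    return -np.sum(y_true * np.log(y_pred), axis=-1)

y_true = np.array([[0.0, 0.0, 1.0]])           # the true class is index 2

confident = np.array([[0.05, 0.05, 0.90]])     # hypothetical good prediction
unsure = np.array([[0.40, 0.30, 0.30]])        # hypothetical poor prediction

loss_confident = categorical_crossentropy(y_true, confident)[0]
loss_unsure = categorical_crossentropy(y_true, unsure)[0]

print(loss_confident)  # about 0.105, which is -ln(0.9)
print(loss_unsure)     # about 1.204, which is -ln(0.3)
```

Because the label is one-hot, only the probability given to the true class matters. The closer it gets to 1, the closer the loss gets to 0.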

We also set the metrics to accuracy so that we will get the details of the accuracy after training.
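The decay value shrinks the learning rate a little after every batch. The sketch below assumes the time-based rule that, as far as I know, the older keras SGD optimizer applies: lr = initial_lr / (1 + decay * iterations), where iterations counts batch updates. It also assumes 50000 training images and a batch size of 32, as used in this tutorial. Treat the numbers as an approximation of what happens during training.

```python
import math

def decayed_lr(initial_lr, decay, iterations):
    """Time-based decay (assumed rule): the rate falls as updates accumulate."""
    return initial_lr / (1.0 + decay * iterations)

initial_lr = 0.01
epochs = 100
decay = initial_lr / epochs                # 0.0001, as in the compile step
steps_per_epoch = math.ceil(50000 / 32)    # batch updates per epoch

for epoch in (0, 1, 10, 100):
    iterations = epoch * steps_per_epoch
    print(epoch, decayed_lr(initial_lr, decay, iterations))
```

Under these assumptions the learning rate starts at 0.01 and ends at roughly 0.0006 after 100 epochs, so the model takes large steps early on and much smaller ones near the end.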

Training the Image Classification Model

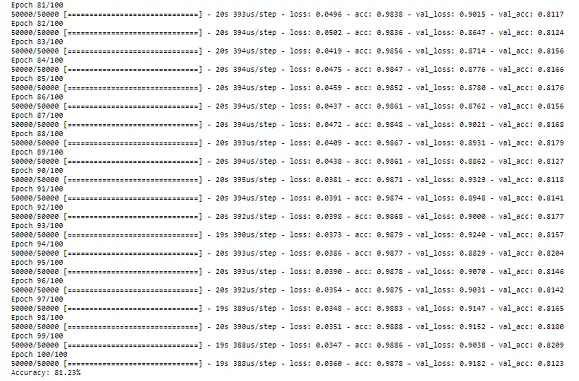

Now, it is time to train our model and see its accuracy. We can use the fit() method of keras to train the CNN.

model.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100, batch_size=32)

# Final evaluation of the model

scores = model.evaluate(X_test, y_test, verbose=0)

print("Accuracy: %.2f%%" % (scores[1]*100))

Note that we set the epochs to 100, so it will take a long time to train the entire model. If you don’t want to wait that long, you can reduce the epochs to a lower value, at the cost of some accuracy. Training for 100 epochs should give a reasonably accurate model.

As you can see from this image, I trained the model for 100 epochs to get an accuracy of 81.23%. It took a long time, even with a GPU-powered system.

I do not recommend training the full 100 epochs on a normal laptop without a GPU. It will be very slow and will keep the machine under heavy load for hours. Reduce the number of epochs or use a GPU-powered system if you can.

Save the Model

As soon as you finish training your model, you should save it into a file so that you can use the same model in the future. You don’t want to train 100 epochs again. So, do not skip this step.

model.save('project_model.h5')

This will create an HDF5 file named ‘project_model.h5’. Hierarchical Data Format (HDF) is a set of file formats (HDF4, HDF5) designed to store and organize large amounts of data.

Loading the Saved Model

If you want to use the saved model in the future, you can load that into your project using the following lines of code.

#loading the saved model

from keras.models import load_model

model = load_model('project_model.h5')

Now, you can use this model to do whatever you want.

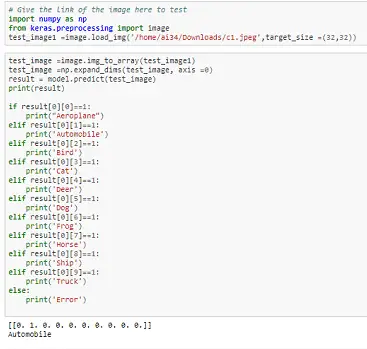

Testing the Model with some random input images

We have trained the model and are done with the project. Now, let’s test the model that we have made with some random images downloaded from the Internet.

We will see whether the model will predict everything correctly or not.

So, first of all, you need to load the saved model. If you have done that, then let’s give some input to the model.

import numpy as np

from keras.preprocessing import image

# Class names in the label order used by cifar-10

class_names = ['Aeroplane', 'Automobile', 'Bird', 'Cat', 'Deer', 'Dog', 'Frog', 'Horse', 'Ship', 'Truck']

# Give the path of the image here to test

test_image1 = image.load_img('/home/ai34/Downloads/c1.jpeg', target_size=(32, 32))

test_image = image.img_to_array(test_image1)

# Scale the pixels to 0.0-1.0, the same way as the training data

test_image = test_image / 255.0

# Add a batch dimension: (32, 32, 3) becomes (1, 32, 32, 3)

test_image = np.expand_dims(test_image, axis=0)

result = model.predict(test_image)

print(result)

# The predicted class is the one with the highest probability

print(class_names[np.argmax(result[0])])

I went to a website called pexels.com, where you can find a lot of high-quality images. I downloaded one image for each of the object classes in Cifar-10.

I tried all those 10 images as inputs and the model was able to predict 8 of them correctly.

Here is a screenshot of one prediction (when I gave an image of a car as the input).

You can see that it predicted the class correctly. The model did a pretty good job of predicting the input images.

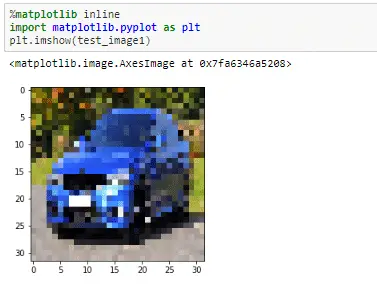
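The model returns one probability per class, so it can be useful to look at more than just the top guess. The following sketch shows one way to list the most likely classes. The `top_predictions` helper and the probability vector are made up for illustration; with the real model you would pass in one row of the predict() output instead.

```python
import numpy as np

# Class names in the label order used by cifar-10
CLASS_NAMES = ['Aeroplane', 'Automobile', 'Bird', 'Cat', 'Deer',
               'Dog', 'Frog', 'Horse', 'Ship', 'Truck']

def top_predictions(probabilities, k=3):
    """Return the k most likely (class name, probability) pairs."""
    order = np.argsort(probabilities)[::-1][:k]   # indices, highest first
    return [(CLASS_NAMES[i], float(probabilities[i])) for i in order]

# Made-up softmax output for a picture of a car
fake_result = np.array([0.02, 0.81, 0.01, 0.01, 0.01,
                        0.01, 0.01, 0.01, 0.03, 0.08])

for name, probability in top_predictions(fake_result):
    print(name, probability)
```

For this made-up vector the list starts with Automobile, followed by Truck and Ship. Seeing the runner-up classes makes it easier to spot which classes the model tends to confuse.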

If you want the system to display the input image that you have given, you can use the following lines of code.

%matplotlib inline

import matplotlib.pyplot as plt

plt.imshow(test_image1)

This will display the input image in the notebook.

That’s it. We are done with the image classification project.

Conclusion

So, let’s wrap up this tutorial very quickly.

In this tutorial, we created an image classifier using deep learning to classify 10 objects in the cifar-10 dataset. We used the keras library of Python for the implementation of this project.

We created a CNN model with several layers and trained the model. Finally, we tested the classification model by giving some random images downloaded from the Internet.

Our image classifier reached an accuracy of 81.23% on the test set. It also did a pretty good job of classifying the random input images that we gave it.

Did you face any problems or errors while doing this project? If you have any doubts or queries, feel free to let me know in the comments down below.

If you are passionate and ready to do more projects, then check out my article on 21 machine learning project ideas.

I created this tutorial to help you to do more hands-on machine learning projects rather than learning all the boring theories. I would appreciate it if you would be willing to share it. It will encourage me to create more useful tutorials like this.

Keep learning. Cheers!

16 thoughts on “Cifar-10 Image Classification Using Keras”


Good tutorial. Really helpful.

Thanks

Can you email me test image dataset?

Do you mean the input validation images? You can give any image as input for validation.

I downloaded some random images from the Internet for each class. You can find high-quality images from Pexels.com. Give it as input and see what the model predicts.

I am trying to run this code in my system but I am facing some value error while training the model.

ValueError: Error when checking target: expected dense_6 to have 2 dimensions, but got array with shape (50000, 10, 2, 2, 2, 2, 2, 2, 2).

The above is the shape of y_train. Could you please tell why am I facing this error?

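I’m not getting any errors using this code, so I’m not sure exactly why you are. The repeated 2s in that shape suggest that the one-hot encoding step ran more than once on y_train. Reloading the data and running to_categorical only once should give the expected shape of (50000, 10). Alternatively, you can skip the one-hot encoding and use sparse_categorical_crossentropy with integer labels, reshaping them with y_train = y_train.reshape((-1, 1)).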

I suggest you go through this discussion: https://github.com/keras-team/keras/issues/3009

How can we use our own dataset instead of cifar 10 dataset

You can find a dataset from Kaggle if you want to use some other datasets. If you want to use your own images, create separate test and train directories for all the classes. Then feed that data into the model using the flow_from_directory method. If you want to use multiple classes, you can do that as well. Create the dataset by yourself for each class and feed that into the model to train it.

why I’m getting this type of error:

AttributeError Traceback (most recent call last)

in

----> 1 train_set = train_set.astype('float32')

2 test_set = test_set.astype('float32')

3 train_set = train_set / 255.0

4 test_set = test_set / 255.0

AttributeError: 'DirectoryIterator' object has no attribute 'astype'

I’m not sure why you’re getting an error. I didn’t see any errors like this when I did this project. Do some research on your end. Programming is all about problem-solving.

How many epoch model can I train with my laptop without affecting the laptop?

It depends on the laptop specs and its capabilities. It would be better if you have a graphics card, a good processor, and memory.

I need the weights h5 file I cannot train for this long. can any one give me the weights?

Thanks.

Excellent teaching, Thanks!

Glad I could help!

Hi ashwin,

I’m trying to follow your tutorial, but I’m running into an error in the following part of your code:

lrate = 0.01

decay = lrate/epochs

sgd = SGD(lr=lrate, momentum=0.9, decay=decay, nesterov=False)

model.compile(loss='categorical_crossentropy', optimizer=sgd, metrics=['accuracy'])

The Error:

NameError: name 'epochs' is not defined

I don’t see you defining epochs previously in your tutorial, what should epochs be defined as? epochs = 0? or should epochs be defined as epochs = 100 before training the model?